At some point I’m going to sound like a broken record, saying over and over how amazing each of these visits is. There are only so many superlatives you can throw around before they start to lose their meaning. Before that happens, though, we should reserve some for Dr Nanthia Suthana’s lab. It was an amazing tour, with a remarkably generous host and obliging postdocs, each ready to talk with visitors about their work and their equipment. (To say that they were incredibly knowledgeable about everything would be to state the obvious.)

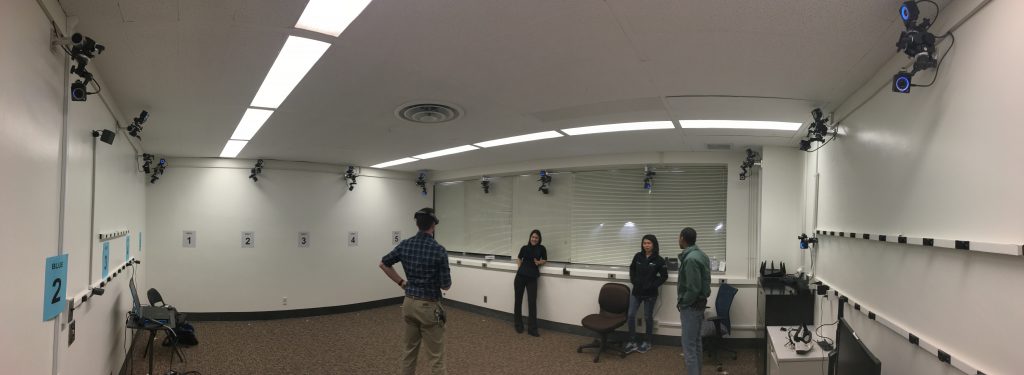

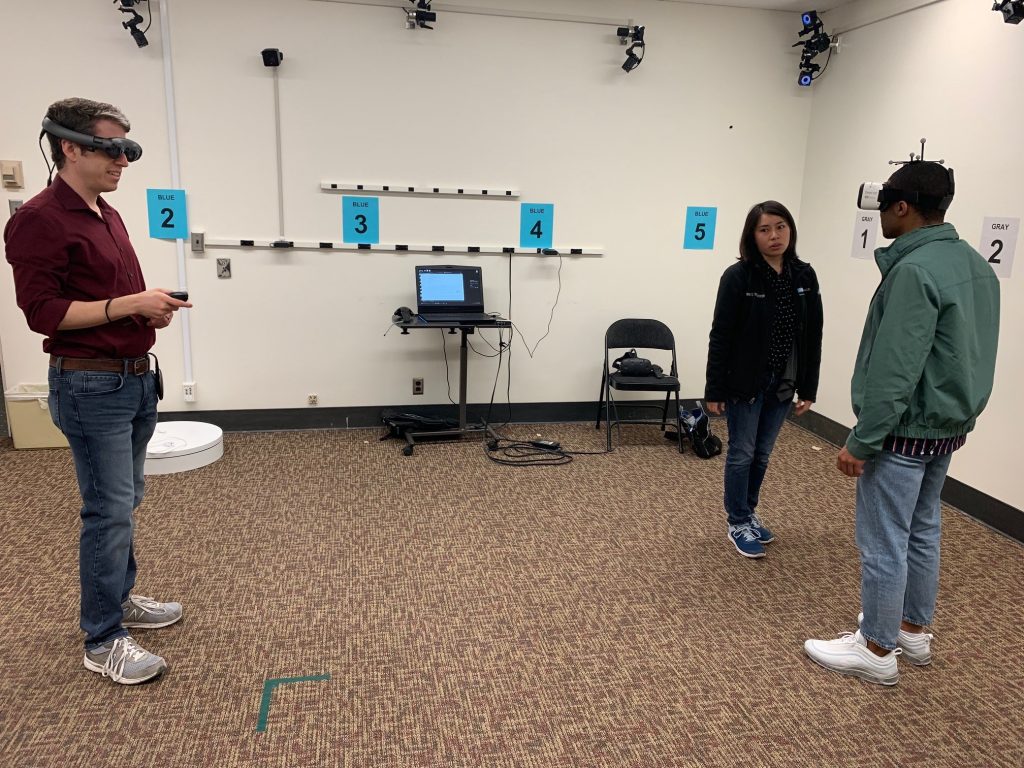

Visitors are immediately struck by the large, open space, ringed by 24 infrared base stations placed throughout the room. These sensors are integrated with all of the VR equipment in the space and are used not only to set virtual boundaries but also to track markers. They are capable of sub-millimeter motion tracking, so don’t move. Or do move, depending on what they need.

The entire space boasts an impressive amount of hardware. Some of it includes:

- 24 OptiTrack base stations

- A Magic Leap AR headset

- Microsoft HoloLens

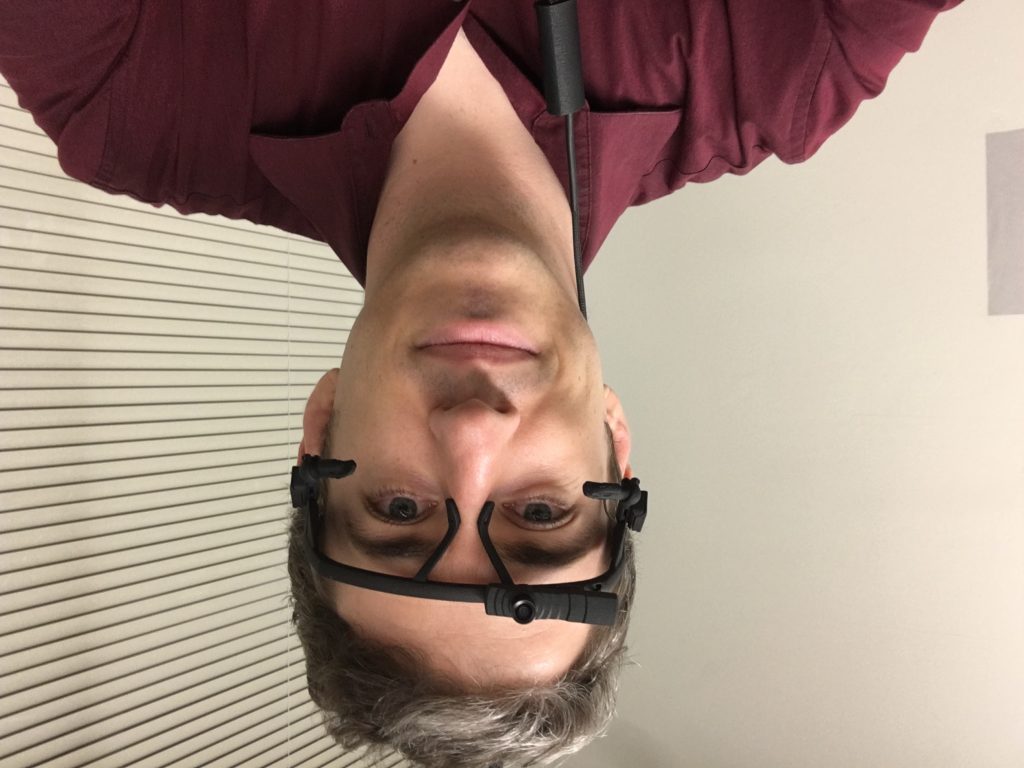

- HTC Vive complete with a Tobii eye-tracking attachment

- Samsung VR headset with SMI eye tracking

- Motion capture suits of various sizes

- Artec Eva scanner and automatic rotating platform

- BioPac – state-of-the-art biometric measurements

- ANT Neuro eego sport 64-channel mobile EEG system

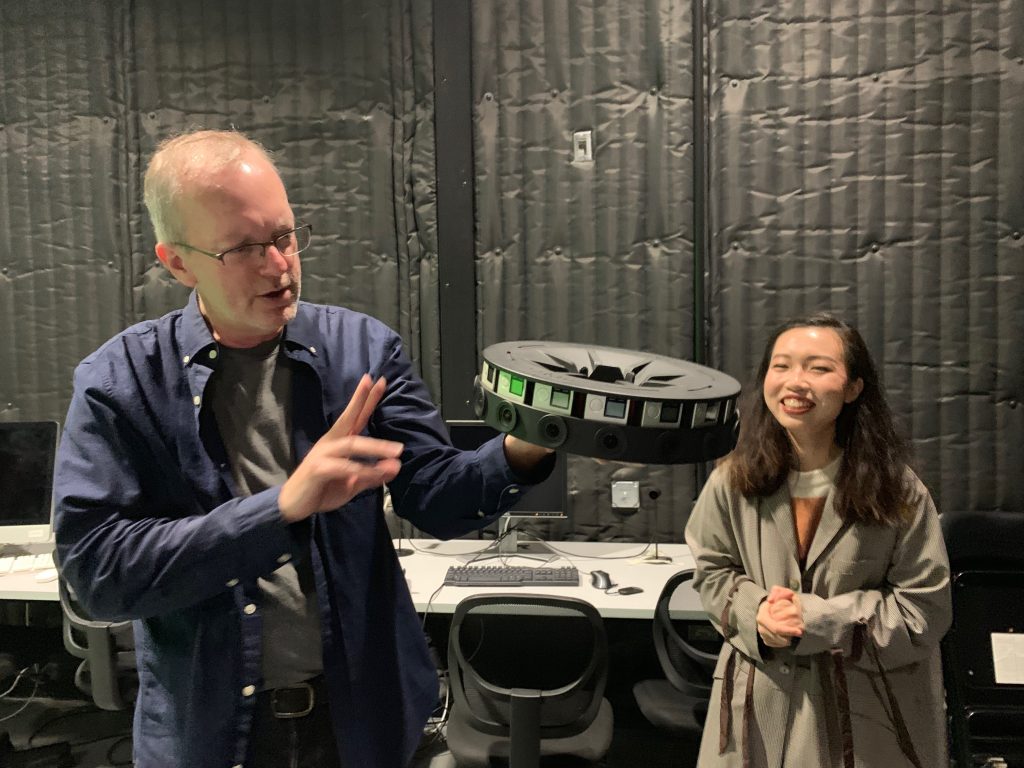

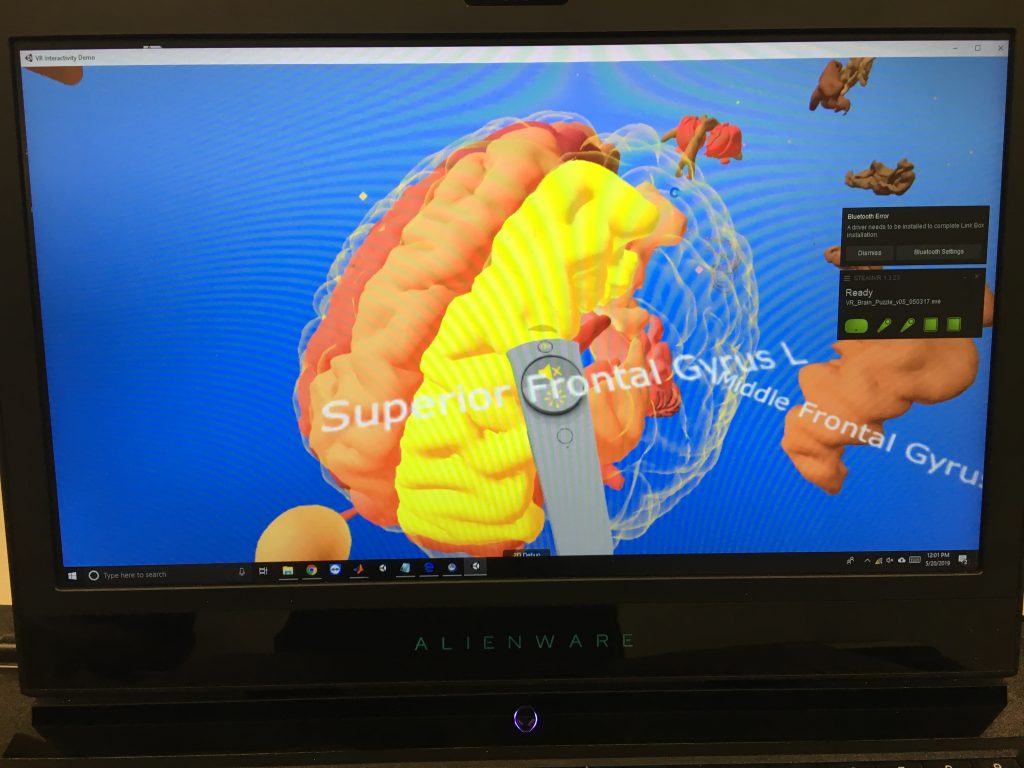

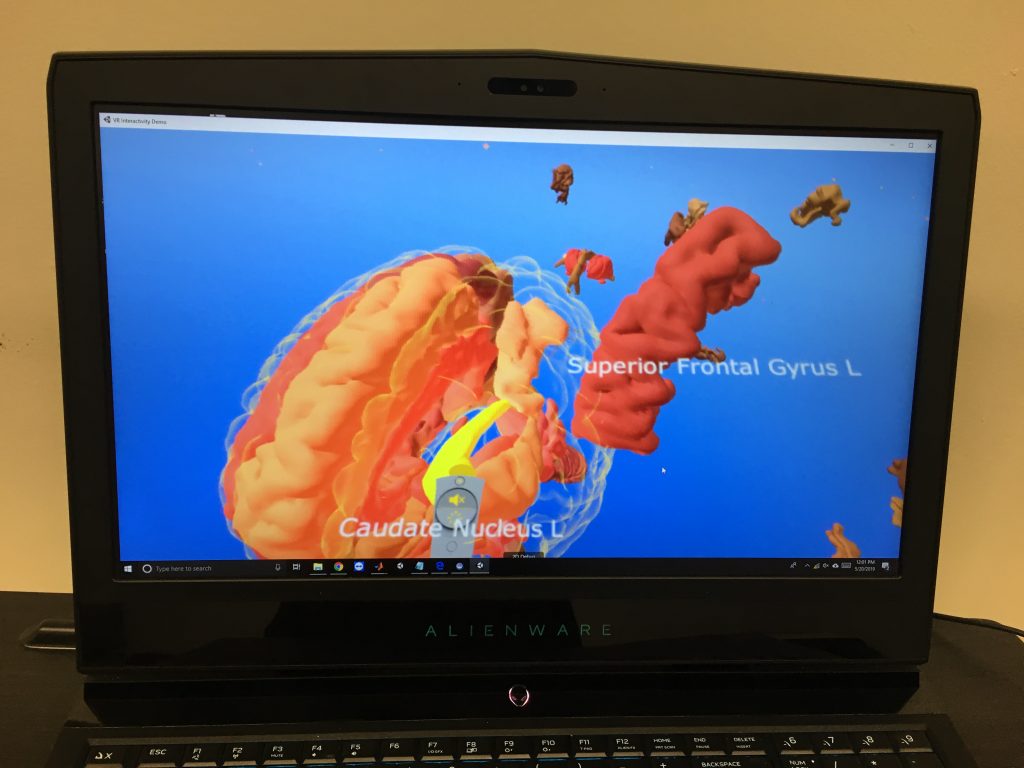

So what do they do with all this stuff? Well, a whole hell of a lot, as it turns out. For starters, the Vive headset station is a great example of cross-campus collaboration. The environments created for the Vive are for educational purposes. Yeah, you guessed it: they’re teaching folks about the brain.

The Magic Leap was probably my favorite experience. I’d never really done AR before, and this was about the best environment in which to try it. I mean, I don’t know about you, but I don’t have 24 OptiTrack base stations at home. The 18 that I have just don’t seem to cut it.

As fun as the AR environments I got to experience with the Magic Leap were, the most impressive thing about the lab is the research it is conducting.

Patients with epilepsy are sometimes given an implant that sits inside the skull, on the surface of the brain, with wires that go into the brain itself. Now, I don’t know if you know this, but I’m not a neuroscientist – I don’t even know if I spelled that correctly. But from what I could gather, these wires stimulate the brain in a way that alleviates epileptic episodes.

That’s all well and good, but these implants were traditionally one-way streets. Nowadays, many implants are of a newer, recently FDA-approved variety. These perform the same functions as the older ones (that is, if I got that right in the first place), but they are a two-way street: external equipment can connect to them, and they can send out data about what’s going on inside the brain. Again, not a neuroscientist, but basically these wires not only send electrical signals into the brain but can also read and transmit information about what’s happening deep inside it.

Some of the environments developed for this implant research revolve around memory. I, of course, passed the test with flying colors but I don’t remember what my score was.

Subjects wear special caps that read the information sent by the implant’s wires deep in the brain. The wires sit in the hippocampus (or hippopotamus, or something), which lets the researchers study memory while subjects move through an AR environment designed to test it.

Special thanks to Cory Inman, postdoctoral researcher; Diane Villaroman, resident programmer analyst; and Sonja Hiller, research assistant and lab manager.

There’s definitely some interesting research going on here. If you can think of a partnership or research project, don’t email me; email Dr Suthana.