GRANT PROGRAM


I. PURPOSE

Grant Guarantee Program for Post-Pandemic Recovery Assistance

EON Reality’s grant program provides academic institutions and governmental organizations with support to fund the launch of new XR programs in the post-pandemic world. Drawing on decades of experience working with governments and intergovernmental agencies, EON Reality helps qualifying partners identify and locate grants that cover a significant share of the cost of a new XR Center. The EON Reality Inc. (EON) Grants Program is intended to provide support for the following purposes:

- Post-Pandemic Recovery

- XR Deployment at Scale

- Social Development

- Localized Content Creation

II. ELIGIBILITY

Only invited candidates are eligible for the EON Grant Guarantee Program. The Program has been developed for organizations that are seeking XR-based EdTech solutions at scale and lack the funding to launch organization-wide rollouts of XR programs in the post-pandemic world.

III. HOW DOES IT WORK?

If the Application is approved, EON Learn for Life issues a Certificate of Approval, and EON Reality and the Applicant sign an Offer Letter to set up an EON-XR Center as follows:

- EON Reality delivers the EON-XR platform for up to 5000 students and 750 work/internships upfront as per the EON-XR Center equipment list.

- EON guarantees 100% of the EON-XR Center funding: 78% with an EON co-investment and 22% with a Donation Guarantee from EON Reality Learn for Life.

- In conjunction with EON Reality’s delivery of the EON-XR platform, the Applicant pays a one-time Grant Guarantee Fee of 1% of the EON-XR Center value ($254,122).

- Once the Offer Letter is signed, EON and the Applicant aim to secure an additional grant of $6.7 million, of which $1.3 million will be provided to the Applicant as a cash grant to expand the XR operation, and the balance covers the donation guarantee amount.
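The funding split described above is simple arithmetic, and a short sketch can make it concrete. The Python function below is illustrative only: the name `funding_breakdown` and the sample center value of $10,000,000 are assumptions made for this example, not figures from the program. Only the 78%, 22%, and 1% rates come from the text above.

```python
def funding_breakdown(center_value_usd: int) -> dict:
    """Split a hypothetical EON-XR Center value using the stated percentages."""
    # Integer arithmetic keeps whole-dollar results exact.
    co_investment = center_value_usd * 78 // 100  # 78% EON co-investment
    # The remaining share (22%), taken as the remainder so both parts sum to the total.
    donation_guarantee = center_value_usd - co_investment
    # One-time Grant Guarantee Fee of 1%, paid by the Applicant.
    guarantee_fee = center_value_usd // 100
    return {
        "co_investment": co_investment,
        "donation_guarantee": donation_guarantee,
        "guarantee_fee": guarantee_fee,
    }


if __name__ == "__main__":
    # Assumed center value, chosen only for illustration.
    print(funding_breakdown(10_000_000))
```

For the assumed $10,000,000 center, this works out to a $7,800,000 co-investment, a $2,200,000 donation guarantee, and a $100,000 fee.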

IV. GRANT PROCESS

Only applications received by April 30th, 2021 will be considered. The seven-step grant procedure is as follows:

- Introduction Meeting – Introduction of the EON-XR Center Grant Guarantee Program (GGP).

- Application Submission – Submission of the online application. There are no financial obligations, binding commitments, or liabilities associated with submitting the application.

- Committee Determination – All applications are reviewed by the GGP Committee and subject to determination within 10 days after receipt.

- Certificate of Approval – If the application is approved, a Certificate is issued for an amount between $5 million and $25 million USD, based on the merits of the Application, to establish an EON-XR Center for up to 5000 students and 750 work/internships.

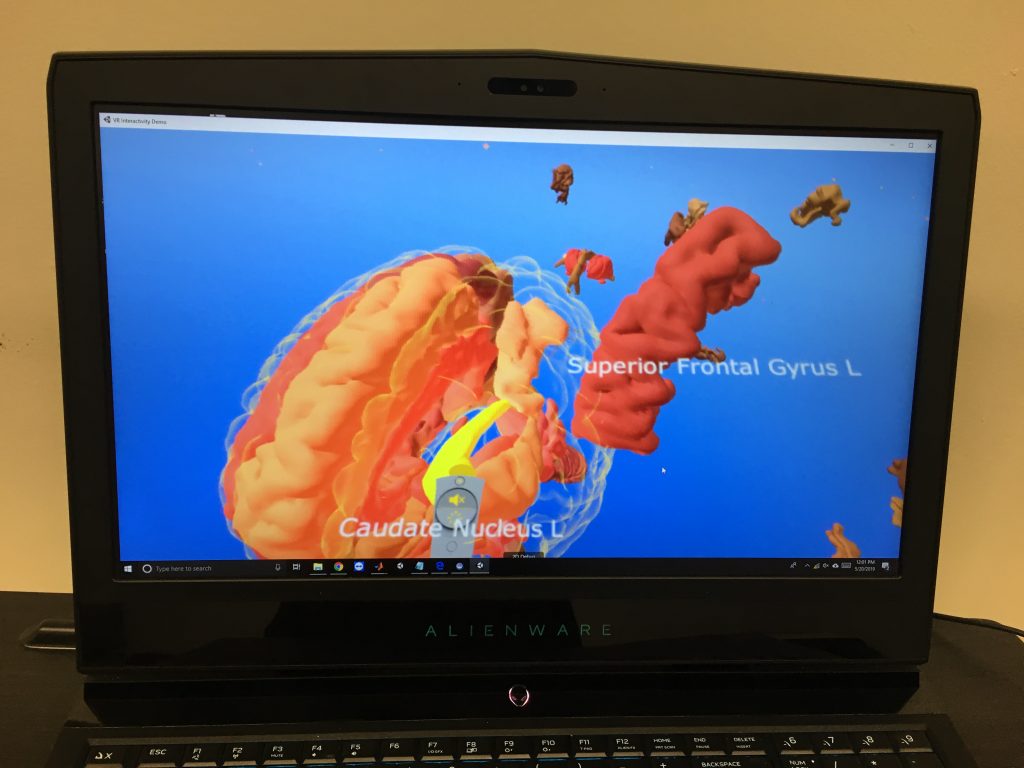

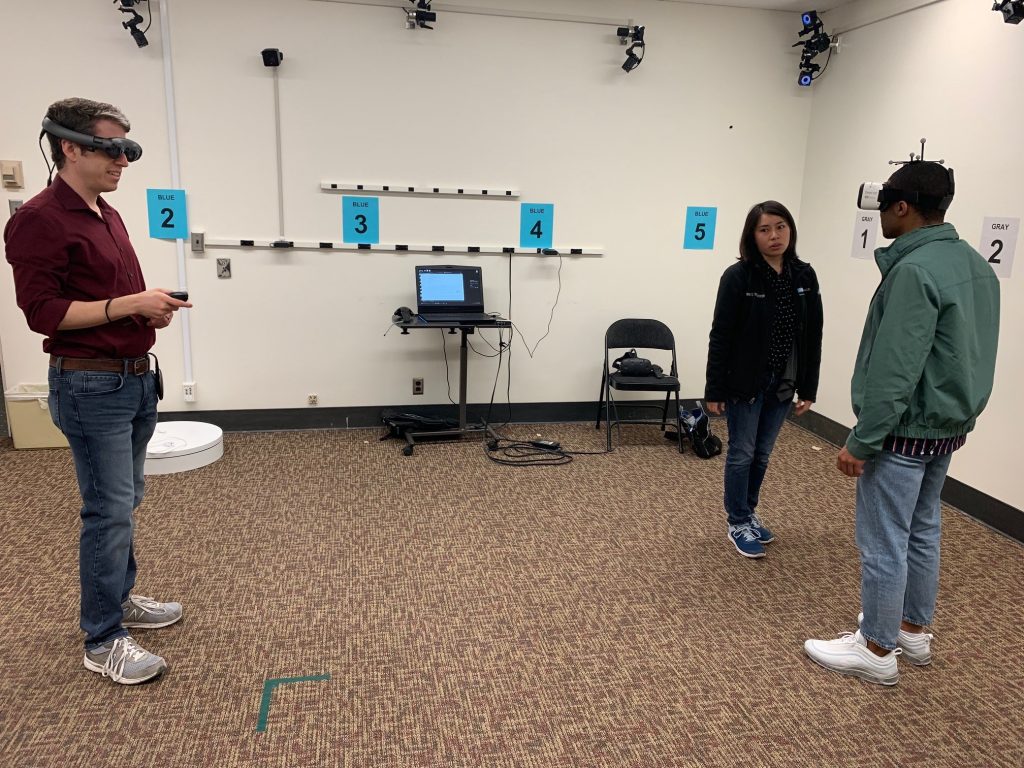

- XR Solution Meeting – Deep Dive EON-XR Platform demonstration for XR deployment at scale.

- Offer Letter – After being approved, the Applicant receives the Offer Letter. The Applicant has up to 45 days to approve or decline the Offer Letter and Grant Guarantee.

- Delivery and Implementation Plan – EON delivers the EON-XR Center along with the pedagogical and technical XR experts and starts implementing the Academic EON-XR Program.

The Certificate of Approval is valid for 60 days. If the Offer Letter is not signed by both parties within 60 days, the Certificate of Approval expires, and the grant is repatriated to the Learn for Life Foundation for the next Applicant.
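The time limits mentioned in the process (10 days for the Committee determination, 45 days to respond to the Offer Letter, 60 days of Certificate validity) can be turned into concrete dates. The sketch below is a minimal illustration under stated assumptions: it counts calendar days, and it assumes each period starts on the date passed in (receipt of the application, receipt of the Offer Letter, and issuance of the Certificate, respectively). The function names are hypothetical.

```python
from datetime import date, timedelta

REVIEW_DAYS = 10             # Committee determination window after receipt
OFFER_RESPONSE_DAYS = 45     # time to approve or decline the Offer Letter
CERTIFICATE_VALID_DAYS = 60  # validity of the Certificate of Approval


def review_due(application_received: date) -> date:
    """Latest date for the Committee determination (assumes calendar days)."""
    return application_received + timedelta(days=REVIEW_DAYS)


def offer_response_due(offer_received: date) -> date:
    """Latest date to approve or decline the Offer Letter (assumed start: receipt)."""
    return offer_received + timedelta(days=OFFER_RESPONSE_DAYS)


def certificate_expires(certificate_issued: date) -> date:
    """Date the Certificate of Approval lapses (assumed start: issuance)."""
    return certificate_issued + timedelta(days=CERTIFICATE_VALID_DAYS)


if __name__ == "__main__":
    # Hypothetical dates, for illustration only.
    received = date(2021, 4, 1)
    print("Determination due:", review_due(received))
    print("Certificate expires:", certificate_expires(review_due(received)))
```

Whether the program counts calendar or business days is not stated in the text, so the actual dates should be confirmed with EON.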

V. REVIEW AND AWARD

The Grant Guarantee Program Committee, consisting of EON’s Advisory members, will determine the overall quality of each Grant application. Applications will be evaluated in accordance with the criteria outlined below. Additional consideration will be given to the Applicant’s potential for carrying out the project, the time commitment, and the adequacy of the justifications presented.

All applications will be forwarded to the Grant Guarantee Program Committee for final approval and will be reviewed within 10 days after receipt. If an application is recommended for approval, the Committee will assign it a score, which will determine the amount to be received (within a range of $5 million to $25 million USD) based on the merits of the application.

There are no financial obligations, binding commitments, or liabilities associated with submitting the application. If your organization is approved, you will receive a certificate that is valid for 2 months. During this time, you can approve or decline the Offer Letter and grant. After receiving your application, EON Reality will take time to go through the agreement with you.

For additional information, contact:

EON Reality Inc.

18 Technology Drive, Suite 110

Irvine, CA 92618

United States

949.460.2000

dan@eonreality.com

VI. EVALUATION CRITERIA

Once your application has been found admissible and eligible, EON’s Grant Guarantee Program Committee evaluates it against the criteria below.

Proposals are evaluated and scored against the following selection and award criteria: organizational excellence; post-pandemic recovery impact; quality and quantity of the XR deployment at scale; social development, with a focus on creating job opportunities for youth and building entrepreneurship opportunities in the digital economy; and localized content creation.

VII. SCORES

The Grant Guarantee Program Committee will score each award criterion on a scale from 0 to 5 (half point scores may be given):

0 – Proposal fails to address the criterion.

1 – Poor. The criterion is inadequately addressed or there are serious inherent weaknesses.

2 – Fair. The proposal broadly addresses the criterion, but there are significant weaknesses.

3 – Good. The proposal addresses the criterion well, but a number of shortcomings are present.

4 – Very good. The proposal addresses the criterion very well, but a small number of shortcomings are present.

5 – Excellent. The proposal successfully addresses all relevant aspects of the criterion. Any shortcomings are minor.
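The scale above can be expressed as a small validation helper. This is a hedged sketch, not the Committee's actual tooling: the function names are hypothetical, and describing a half-point score as lying "between" two bands is an assumption, since the text does not say how half points should be read. The text also does not say how criterion scores are combined or mapped to a grant amount, so the sketch does not attempt that.

```python
# Band labels taken from the scale above; the label for 0 paraphrases its description.
LABELS = {
    0: "Fails to address the criterion",
    1: "Poor",
    2: "Fair",
    3: "Good",
    4: "Very good",
    5: "Excellent",
}


def is_valid_score(score: float) -> bool:
    """True if the score is within 0 to 5 and is a whole or half point."""
    return 0 <= score <= 5 and score * 2 == int(score * 2)


def describe_score(score: float) -> str:
    """Return the band label, or the two neighboring bands for a half point."""
    if not is_valid_score(score):
        raise ValueError(f"score must be 0 to 5 in half-point steps, got {score}")
    lower = int(score)
    if score == lower:
        return LABELS[lower]
    # Assumption: a half-point score sits between the two adjacent bands.
    return f"between {LABELS[lower]} and {LABELS[lower + 1]}"


if __name__ == "__main__":
    print(describe_score(4))
    print(describe_score(3.5))
```

A validator like this could sit in front of any score-entry form so that values such as 3.2 or 5.5 are rejected before they reach the Committee's records.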